In this blogpost, Tim Cowlishaw, Katrin Fritsch and Michelle Thorne introduce quadrants for navigating positions on AI.

1. Why we made these quadrants

The conversation around AI can be overwhelming, and as concerned humans confronted with the unprecedented acceleration of this phenomenon, we found ourselves looking for ways to find orientation, support our own critical thinking, and develop shared language for this topic.

At the Green Web Foundation, we often meet technologists and people who care about sustainability and want to use digital tools responsibly. For example, participants in our AI briefings ask, “Should we use AI at all? What kind of AI models are available that are less extractive and more sustainable?”

These questions have been difficult for us to answer simply, because they are choices about tools that exist in very unjust and unsustainable contexts.

In our experience, discussions tend to focus on the pros and cons of different kinds of artificial intelligence applications and the merits of using various providers. But for people making organisational decisions about these technologies, what is often absent is an analysis of the political economy of these tools and how their creation and usage can deeply harm people and ecosystems. Or, there is a recognition of these dynamics, yet a perceived distance between an individual choice and collective impacts, or a rationalising of tradeoffs. (“If I don’t use it, my competition will and I’ll lose.” “What difference does my usage make?” “But I’m using these tools for good.” “I’m picking the least bad provider.”)

We realised that it would be helpful to have a way of talking about choices that is not just a binary of “adopt or not”. This approach is reflected in our organisation’s work, from our fellowships to joint statements with civil society and commissioned reports on AI greenwash: there is a need to call out the systemic harms of AI systems, to articulate redlines, and to continually position technical choices within a larger political economy.

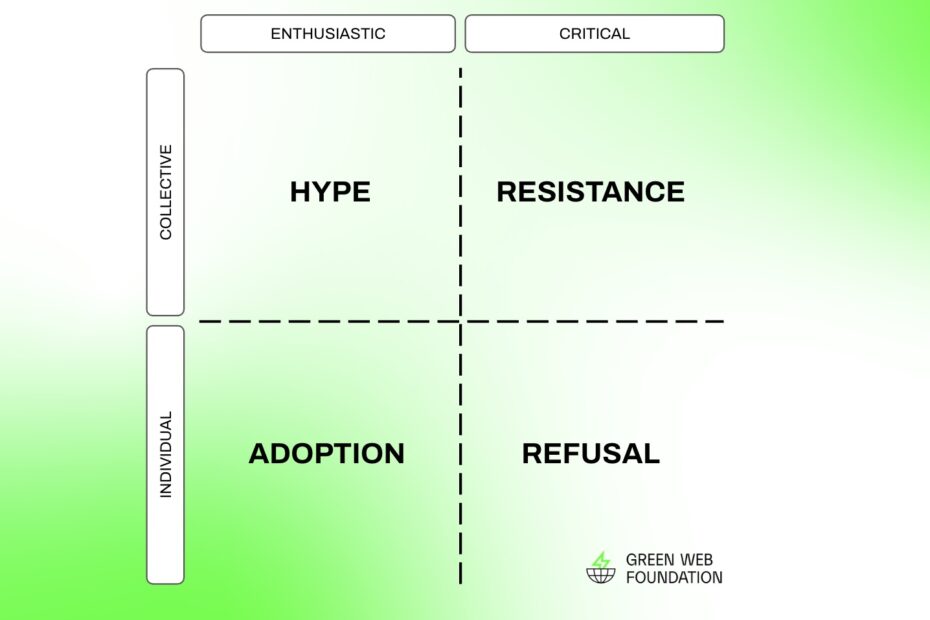

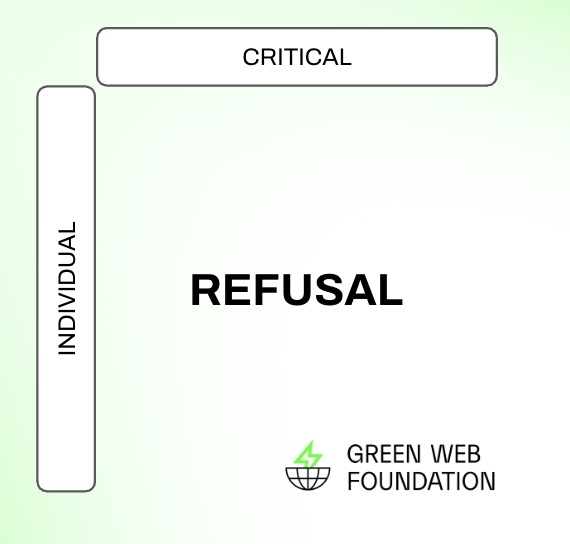

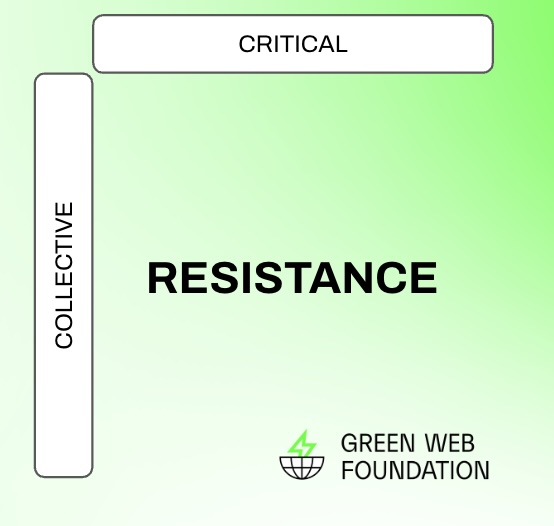

We began sketching these quadrants. The diagram has two main axes: “enthusiastic” <-> “critical” and “individual” <-> “collective”. This results in four broad discourses on AI with different attitudes: adoption, hype, refusal, and resistance. In this blog post, we unpack this diagram and share how it’s helping us inform our work.

Importantly, most conversations about AI are not static. You might begin in a specific position and, upon learning something, move to another.

We want to normalise and destigmatise the process of changing our minds.

Each of us writing this article has been at different corners of this quadrant at different times and for different reasons, and it is thanks to respectful exchanges that we’ve learned things and changed our minds. Real dialogue is about aliveness, about being in relation with each other and with knowledge, in an ongoing way. It’s in that spirit we share this post!

2. Adoption, Hype, Refusal, and Resistance: Tour of the Quadrants

You have surely been in a conversation framed as “why to use AI or not.” There’s little doubt that responses to this question fall along a spectrum of “enthusiastic” to “critical”. However, in our own exchanges, we realised that only looking along this single axis hides another aspect, which can be just as useful to think about. It was difficult to find the right framing for this second axis, but we settled on “individual” to “collective”, meaning whether the consequences of AI and our responses to it are understood at a more individual or more collective level.

By considering how these two axes interact, we found ourselves having richer and more generative (ha!) conversations. Rather than narrowly defining decisions as “use or don’t use”, this diagram opens up other possible attitudes to AI: not just a question of Adoption, but also of Hype, Refusal and Resistance. Let’s look at each of these more closely.

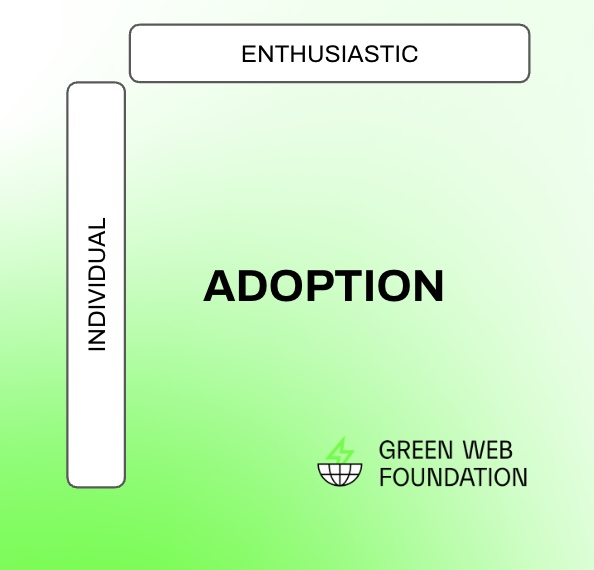

Adoption

Popular ideas about technological progress tend to follow the linear model of innovation: the idea that technological progress is simply the result of applied scientific discovery and a continuously unfolding result of “progress”. This normalises new technologies as being inevitable and natural. It suggests that AI is simply a tool to be adopted, and sees AI as something which must inevitably be adapted to. It suggests that these technologies are the result of a force outside our control to which we must adapt regardless of whether we personally approve of or desire them.

Common sentiments here are: “Everyone is doing it, so I will, too”. “This is the future – I don’t want to miss out (or be left behind)”. “AI has been great for me!”. It’s an attitude to modernity reflected in the words of Kurt Vonnegut, “You can’t fight progress. The best you can do is ignore it, until it finally takes your livelihood and self-respect away.”

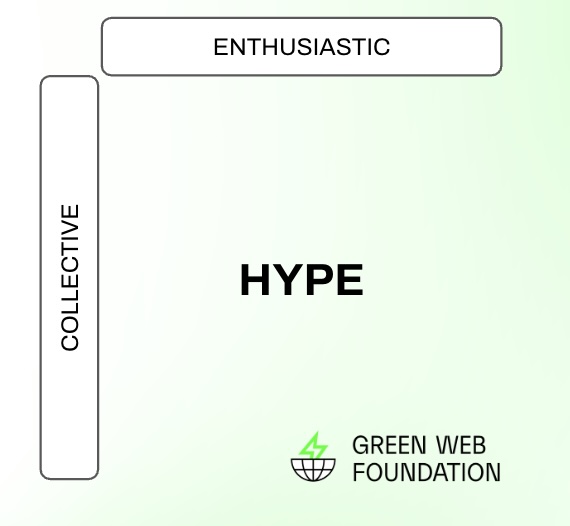

Hype

If AI isn’t simply the result of some idea of linear techno-scientific progress, then what is it? Andreu Belsunces characterises it as Deep Hype: a type of “technological overpromising that differs from conventional hype in a crucial way: it is projected into the long-term future by making grandiose promises of civilisational transformation.”

Hype is the top left quadrant on our schema, which both treats AI as a collective political project and adopts a position which is broadly uncritical and in favour of it. Hype, for Belsunces, is a form of sociotechnical fiction: a performative gesture which legitimises and brings about particular visions of the future. In this case, the future of a world mediated by AI technologies.

These fictions need not be positive or utopian. The dystopian, Terminator-evoking warnings of the “potentially imminent, almost unimaginable power” of Artificial Intelligence from AI doomers and effective altruists also serve to normalise the idea that a ‘more tamed’ form of AI will inevitably be a part of our future. We can also see that these stories have material effects. Hype about AI leads to specific policy and technology decisions which make them a self-fulfilling prophecy: the way in which the unquestioned need to power AI data centres is presented as a justification for growing nuclear capacity is one good example.

Therefore, exposing the rhetorical work of hype from those who have a political interest in the deployment and further development of AI helps illustrate the way in which AI is already a collective concern. It also highlights that recognising these political qualities does not necessarily mean opposing them, and starts to call into question the idea that AI is inevitable, simply the unavoidable result of technological “progress.”

Refusal

Refusing something (in this case, the use and adoption of AI) doesn’t necessarily require a coherent understanding of its political dimension, but simply a commitment to be hopeful and wilful in the face of an unwanted technological imposition. “To refuse is to say no”, writes the anthropologist Carole McGranahan, “but it is not just that. To refuse can be generative and strategic, a deliberate move toward one thing, belief, practice, or community and away from another.”

Refusal in this definition has some important properties. Firstly, it’s deliberate and active. It’s a way of asserting our agency over technology and our relationship to it, of reminding us that we still have a choice about how and whether we engage with it. Secondly, while based on individual action, it also has a social component—it’s a pathway to finding community and strengthening collective resistance. By refusing AI we ally ourselves with others doing the same and can share practices and strategies for doing so, even if their specific political goals, convictions or concrete demands don’t align with our own—an instance of “One no, many yeses”.

Taking an attitude towards AI that’s both active and social has another consequence. In refusing AI as a technology, we also refuse to accept the idea that any technology must be accepted as the inevitable result of progress or that we are simply passive consumers of it. In refusing, we take an active and agentic (ha again!) role in the particular futures we want to see. Refusal is plural. Violet Fox writes on AI refusal in libraries that it “refers to a spectrum of approaches to AI”, ranging from outright refusal to engage with it, to using scare quotes around “artificial intelligence” when we talk about it.

We can see examples of refusal in Professor Nicole Holliday’s statement to her students on “Why Professor Holliday doesn’t use generative AI”, and the way in which Superrr Lab refuses to only talk about AI and instead anchors it within other intersectional topics. We could point also to Ketan Joshi’s statement on his website that “For all of my work, I don’t use generative tools for text, images or videos, or as a reference point or for knowledge retrieval, due to the stack of ethical, environmental and inaccuracy issues that plague them.”

In making these sorts of commitments, and making them publicly, we learn that other ways of living with technology are possible.

For those of us in positions of privilege, making refusal public can create space and support for those who may not be in a position to do so, showing that we don’t have to accept particular technological developments, like AI, as inevitable.

Importantly, this also shows a spectrum of possibilities of how to refuse: the circumstances and levels of autonomy and privilege of each individual might offer different opportunities for refusal, all of which are valuable.

Refusal, therefore, is both a powerful technique in itself for individuals to engage critically with AI and other new technologies, and also a path on the way to a more explicit, collective resistance.

Resistance

The final quadrant, in the upper right of our chart, is a position which both refuses adoption of AI and explicitly recognises the struggle against it as a collective one. It is Resistance. This is a position much closer to the historical view of Luddism described by E.P. Thompson as “a manifestation of a working-class culture of greater independence and complexity than any known to the 18th century.”

Resistance is coordinated and explicitly political. It is both a response to a systemic injustice and is explicitly normative. It reflects a belief that the world should be a certain way, not just for us but for all.

The ideas of Luddism are seeing a resurgence in response to the deployment of AI, inspiring a movement of “informed, skilled, conscientious technologists”. This position is both critical of AI and aware of its status as a political project more than a simple technology in the common sense of the term. Projects, people, and collectives who practice resistance to AI might include, for instance, data-centre resistance groups such as Tu Nube Seca Mi Rio, the 2023 WGA strike, and the Authors’ Guild class action lawsuits.

This position also, to some extent, reflects our own: we see AI as a political project which requires a collective response and are broadly critical of it.

3. Collective Impacts of AI and How That Influences Our Positioning

In our discussions with technologists and policymakers, we often aim to travel from “adoption” to “resistance.” A first step is to highlight the ways AI is already a political project, then explore the collective stakes that are worthy of opposing. In our own positioning, we have found it powerful to understand how AI is situated in larger social concerns, for example, how it affects labour rights, climate justice, women’s* rights, as well as anti-racist and anti-classist movements.

A non-exhaustive list of these collective impacts:

Labour:

AI in its current form is not an output from a single computer. It requires training, moderation and labelling of content by thousands of workers. These ‘data workers’, most of them based in the Majority World, face extremely precarious working conditions: they have to label data fast, work overtime, make difficult content moderation decisions, are traumatised by watching violent content, are paid poorly, and have to fight for basic workers’ rights.

Furthermore, AI runs on hardware, which requires intensive manufacturing and mining – both sectors with horrible track records for labour conditions. The application of AI is justifying a new wave of deskilling and job loss, thanks to the managerial class embracing automation and generative AI. Meanwhile, there have never been more billionaires in the world, and unprecedented economic disparities persist between the people whose labour feeds these tools or is replaced by them and the people who profit from them.

Environment and climate:

The AI boom requires the expansion of data centres, which sets a high (and inflated) energy demand. It is estimated that in the USA alone, gas power plans have tripled to meet the data centre energy demand in the last two years. As a recent report by climate writer and analyst Ketan Joshi found, the “AI for climate” narrative has been a greenwashing strategy with little to no evidence of any positive climate impacts. AI is also used for the exploration and extraction of more oil and gas reserves. Data centres also cause health issues: in Memphis, local residents complain about a lack of water and that they ‘can’t breathe’.

Additionally, there is noise pollution from the onsite gas turbines of data centres. The tech supply chain intensifies the search for more materials like lithium, which displaces local communities, as in the Atacama desert. Illegal mining pollutes water and the environment, while the reliance on rare earth minerals causes political instability, for example Trump’s threat to invade Greenland in early 2026. (It is estimated that Greenland has 25 of 34 ‘critical raw minerals’ as well as significant oil and gas reserves.) Hardware is subsequently thrown out at an unprecedented pace and scale. Electronic waste is the fastest-growing waste stream in the world.

Information Ecosystems:

AI is trained on data from the past. It is built on the assumptions and politics of the people who created it and the data they obtained, in several cases illegally, to train it. “Hallucinations” are an inextricable part of how generative AI functions. Encoding and reproducing old biases at an intense scale affects our ability to articulate and enact more meaningful, just futures. In Silicon Valley, diversity initiatives have vanished rapidly since the second Trump inauguration, further entrenching a monoculture of who shapes these tools.

Racism and sexism continue to be major issues in generative AI, for example with hundreds of nonconsensual sexualised images being created on Grok. AI-produced content is flooding the internet, leading to a massive increase in mis- and disinformation and leaving people cognitively overwhelmed as they try to distinguish between what is true and false. AI slop threatens arts and cultural work by undervaluing these professions and is used to justify divesting from them.

Geopolitical dominance:

AI is a convergence of political, financial, and technical powers that rely on social relations and material impacts to function. It is also a term that is used to flatten a lot of nuance. In many ways, AI is a continuation of an older political project. Some of its key underlying concepts of calculation, categorisation, prediction, and control have shaped the world for centuries. Today, geopolitical narratives about AI are ramped up to project dominance, solidify monopolies and push for growth.

Karen Hao uses the term “Empire of AI” for the political tool used to conquer, repress, expand, and extract under the banner of ‘inevitable progress’. Many states are pursuing versions of this, for example the USA inflating the AI industry to achieve “a specific vision of imperial and expansionist [state] power”, as journalist Brian Merchant describes, while evoking the threat of an “AI arms race” with China to legitimise its dominance.

Taken together, these collective impacts cause us great concern and motivate our response. What impacts worry you? How are people and communities you care about affected? What do you choose to ignore so that your choices are more comfortable?

4. Conclusion: Crossing the boundaries

The four quadrants aren’t mutually exclusive. We’ve already suggested that there’s a fuzzy boundary between “refusal” and “resistance” due to the way acts of individual refusal can lead to the generation of alliances and solidarities within which more organised resistance can be practiced.

Similarly, we see the in-between points on these axes offering some promising and constructive ways to critically engage with AI. Refusal of AI can be partial and selective, and in every pause, space is created to challenge “the inevitable.” What might this look like?

The Frugal AI movement attempts to balance computational power with minimal resource use. Speculative interventions such as Tega Brain’s Slop Evader or Vytas Jankauskas and colleagues’ Latent Intimacies project take an approach that lets us “stay with the trouble” and engage with AI in a non-innocent way. These interventions can also take place on an entirely personal scale, such as Henry Cooke’s attempt to build and use the worst-possible AI server, or form part of publicly available, production applications, such as Fablab Barcelona’s Distributed Design Learning Hub. By refusing the wholesale adoption of AI-as-it-exists, we can consciously and selectively determine conditions that would be acceptable for adopting it, if at all.

Through these pauses, we create space for more restorative, regenerative, and just ideas to get more oxygen and the composure for more organised resistance. This diagram seeks to offer a situated view of AI. In our work, it acts as a compass (not a map) by helping articulate our own position better and find meeting points and shared directions of travel. We hope it also identifies opportunities to build solidarities with people whose interests and struggles may not be the same. “Solidarity,” says Sara Ahmed, “does not assume that our struggles are the same struggles, but a recognition and a commitment to the idea that we live on common ground”.