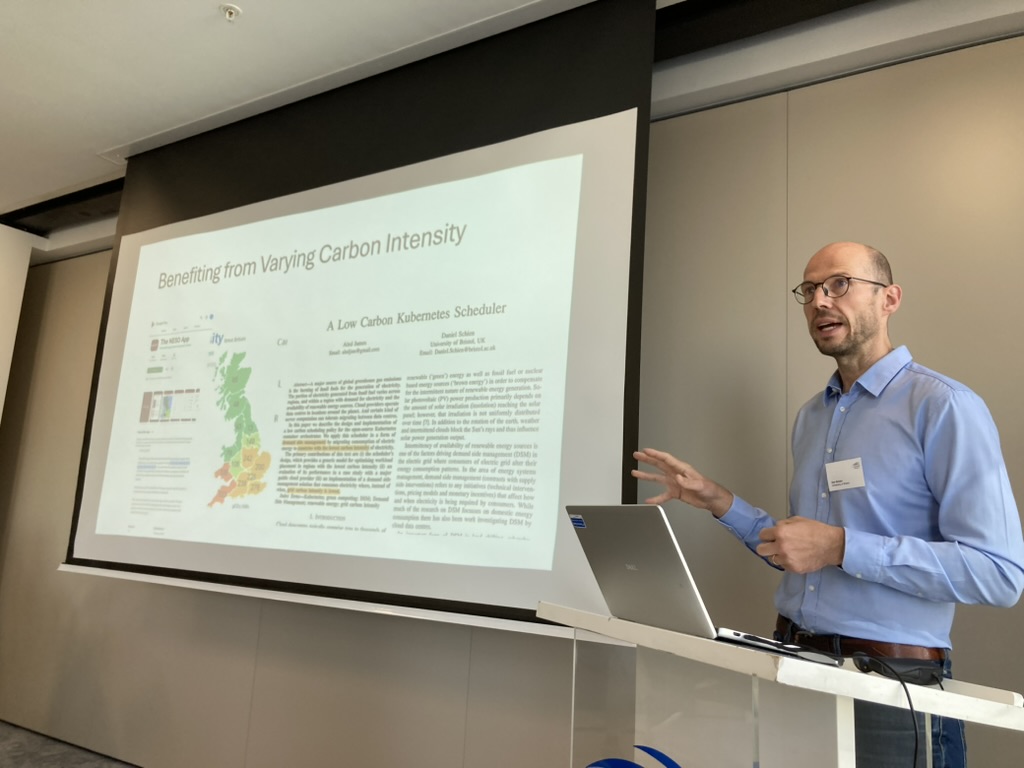

Late last year, our Director of Technology and Policy, Chris Adams, was invited to a day-long workshop on AI Demand Flexibility for Grid Decarbonisation, run by the University of Bristol at the Carbon Trust offices in London, alongside a number of industry domain experts, academics, and policymakers. The insights from the workshop are still being processed and turned into research papers, but given the heightened interest in datacentre buildout around the world, we’re sharing a few takeaways from the event that shaped our thinking on the subject, in the hope that they’ll be useful to others in the meantime.

If, like us, you care about a fossil free internet, then at some point you have to think about how the datacentres that all the sites and apps we use run on actually interact with the underlying electricity grid. A fossil-free electricity grid powered mostly by renewables works quite differently to one powered by fossil fuels, and because datacentres frequently draw significant amounts of power from the grid, it makes sense for the facilities themselves to be designed in a way that complements the changes taking place at the grid level.

We’ve written about this subject at length, and published software libraries in multiple programming languages, like JavaScript and Go, to make it easier to design systems that respond to detectable changes in electricity grids, like their changing carbon intensity. But at the same time, the whole idea of digital infrastructure responding to the grid is still a relatively new and poorly understood field.

So we were happy to be invited to a workshop in the UK in September, organised as part of the CANDISE (Change-Oriented Assessments for Net-Zero Digital Services) research project, that focused on precisely this subject, and we’re grateful that the organisers covered our travel and accommodation.

Also, last week, the UK government announced a new consultation on its plans to reform the way large consumers of electricity like datacentres connect to the grid. In the face of a legal challenge, it is also revising its national policy statement on datacentres, with a new version expected in the coming weeks.

Both of these are likely to change the terms under which datacentres are granted grid connections in the UK, so it seemed timely to share some of the key things we took away from the event with a wider audience.

1. What counts as datacentre flexibility depends on whose point of view you take

If you have been following our work over the last few years, you might have come across the idea of flexibility in data centres in the form of grid-aware or carbon-aware software. At a very high level, when we’ve discussed it here, it has generally referred to the idea of scaling the amount of computation taking place in response to the share of fossil fuels currently being used to generate electricity on the grid.

When the grid is dirty, with a high share of fossil fuels, the software you write would scale things down to minimise the amount of energy-consuming computation and avoid emitting too much carbon pollution. Conversely, when there are a lot of renewables on the grid on a sunny or windy day, your software could afford to do more work and still cause less carbon pollution, because the electricity your code ultimately runs on would be comparatively clean. As a bonus, when there are lots of renewables on the grid, the price of energy is frequently lower, or even negative, meaning that instead of paying for power, you can be paid to consume it.
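To make that concrete, here’s a minimal Python sketch of the kind of logic a carbon-aware service might use to decide how much work to do. It assumes you already have a carbon intensity reading for your grid, in grams of CO2e per kilowatt hour, from some data source. The function name `workers_for_intensity` and the 100 and 400 gCO2e/kWh thresholds are hypothetical, chosen for illustration, and not taken from any of our libraries.

```python
def workers_for_intensity(intensity: float,
                          min_workers: int = 2,
                          max_workers: int = 10,
                          clean: float = 100.0,
                          dirty: float = 400.0) -> int:
    """Choose how many workers to run for a grid carbon intensity in gCO2e/kWh.

    At or below `clean` we run at full capacity, at or above `dirty` we scale
    down to the minimum, and in between we interpolate linearly.
    """
    if intensity <= clean:
        return max_workers
    if intensity >= dirty:
        return min_workers
    # Close to 1.0 just above the clean threshold, close to 0.0 near the dirty one
    fraction = (dirty - intensity) / (dirty - clean)
    return min_workers + round(fraction * (max_workers - min_workers))


for reading in (80, 250, 450):  # hypothetical intensity readings
    print(reading, workers_for_intensity(reading))
```

With these defaults, readings of 80, 250 and 450 gCO2e/kWh give 10, 6 and 2 workers respectively. A real system would probably also want to smooth its readings, so it doesn’t keep flapping between sizes as the grid changes.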

This is very much an approach taken from the point of view of someone writing software, and using the amount of computation as the main lever for change, rather than someone operating a datacentre, or even an electricity grid.

From the perspective of a grid operator, getting servers to do less work is one way to reduce the demand they need to find electricity supply for, but it’s not the only one. When the grid is under stress, another way to get large consumers of electricity to ease pressure on the grid is to get them to run on alternative sources of power instead of getting it from the grid. In an ideal world, this kind of flexibility would come from clean, zero-carbon sources, but a lot of the time, relief for the grid can instead come from large consumers of power switching to run on on-site fossil-fuel powered generators, instead of drawing power from the grid.

This is still flexibility, and it still reduces the demand on the electricity grid, but it’s no longer about scaling back computation so much as switching to a different power source. And if you’re burning diesel or gas to generate electricity, you’re likely emitting more carbon pollution, not less.

A concrete example

Let’s assume you have a web application that you need to deploy into the cloud somewhere so other people can use it, and you use one of the market-leading cloud services, Microsoft’s Azure.

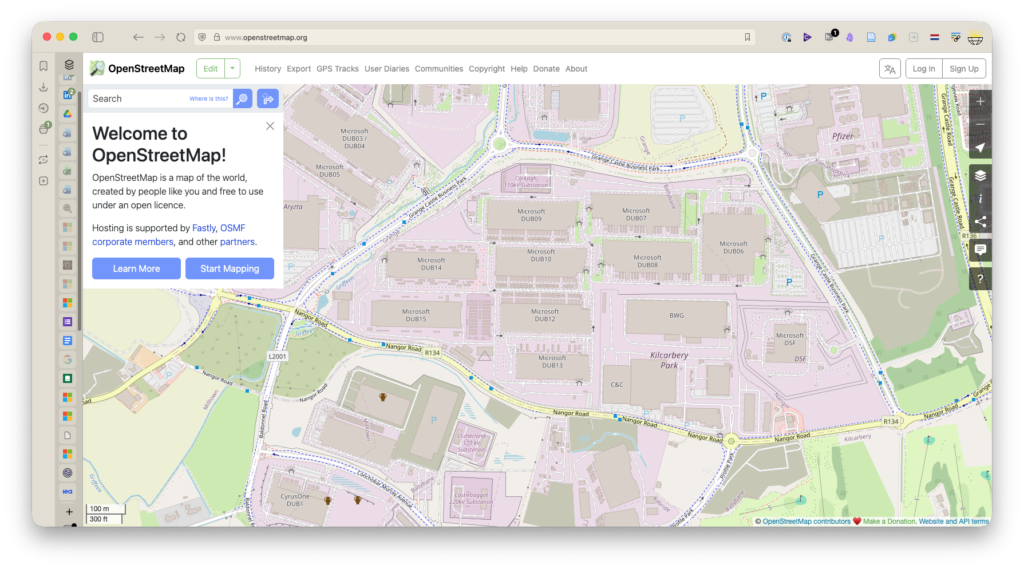

Like other cloud providers, Microsoft lets you choose where to deploy your code, and you choose one of its larger cloud regions, northeurope, which is based in Ireland. Microsoft only really has one cloud region in Ireland, and the name is a bit misleading: on the ground it looks like a cluster of large buildings in West Dublin, which you can see fairly clearly labelled on OpenStreetMap:

northeurope Azure cloud region in West Dublin, Ireland.

When cloud giants like Microsoft talk about carbon-aware software in their own training materials, you’d be forgiven for thinking that if you design software a specific way, your changes to that software are what directly reduce the pressure a datacentre places on the grid at times of stress.

Your changes may have some impact, but it’s also worth taking a moment to see what is actually being built on the campuses housing all these servers. Among the buildings hosting rack upon rack of computers, you can now see clusters of fossil gas-powered engines under construction, intended to provide in the region of 170 megawatts of extra generation capacity. This is not backup power only expected to be used in an emergency: it is power intended to be switched on when the grid is experiencing peak demand, for up to 8 hours a day, all through the year.

How can we tell this generation is designed for this use? It’s literally described in the permits submitted to Ireland’s Environmental Protection Agency (EPA), which are also linked on OpenStreetMap. Heck, you can even see the engines on Google Street View, just as you would if you were driving past the facility. You can also see this as a response to government policy: Ireland’s recent guidance on allowing new datacentres to connect to the grid can very much be read as encouraging more of this in future.

This ended up being a key takeaway for us: we’ve used the term grid-aware in some of our work, but terms like datacentre flexibility and grid-aware can mean quite different things to different people. As ever, this raises all kinds of questions about transparency, and about being able to independently verify the impacts claimed for software interventions. Unsurprisingly, data transparency for green claims ended up being one of the recurring themes of the day.

(NB: This is a good example of why our new verification criteria, which we’re introducing this year, talk about hourly rather than annual clean energy matching. Microsoft recently celebrated reaching a milestone of 100% of its electricity demand being met with renewable energy purchases, but as we can see, you can be 100% matched on an annual basis whilst still burning masses of fossil fuels all through the year.)
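The difference between the two kinds of matching is easy to see with a toy example. In this hedged Python sketch, a four-hour period stands in for a whole year, and the demand and clean purchase figures are made up. `volumetric_match` compares totals, the way annual matching does, while `hourly_match` only credits clean energy against demand in the same hour; both function names are our own, for illustration.

```python
def volumetric_match(demand: list[float], clean: list[float]) -> float:
    """Share of total demand covered by total clean purchases, capped at 100%."""
    return min(sum(clean) / sum(demand), 1.0)


def hourly_match(demand: list[float], clean: list[float]) -> float:
    """Share of demand covered by clean supply available in the same hour."""
    matched = sum(min(d, c) for d, c in zip(demand, clean))
    return matched / sum(demand)


demand = [100, 100, 100, 100]  # MWh consumed in each hour (hypothetical)
clean = [0, 0, 200, 200]       # MWh of clean energy bought in each hour (hypothetical)

print(volumetric_match(demand, clean))  # 1.0
print(hourly_match(demand, clean))      # 0.5
```

On paper the demand is 100% matched, but for the first two hours none of it is: whatever the grid was burning in those hours is what actually powered the servers.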

2. There is an unresolved on-site fossil gas loophole for datacentres in the UK

Another insight from the workshop also related to where grid flexibility comes from when we talk about software, datacentres and decarbonising the grid.

This workshop took place in London, and the UK is a particularly interesting country when talking about how datacentres interact with the grid, because it is one of the world’s largest economies (typically ranking 5th or 6th by GDP globally), it has a huge pipeline of planned datacentre projects, and since the current government launched its Clean Power 2030 plan, it is also the largest economy in the world to aim for an almost entirely decarbonised electricity grid by 2030.

We’ve written before about the UK’s pipeline of datacentre projects, some of which are now in the order of hundreds of megawatts in size, which in terms of electricity demand is comparable to some small towns. Projects this big are not easy to integrate into electricity grids, and as a result some are waiting in connection queues for years. Faced with this, a number of datacentre operators have been lobbying the UK government to be allowed to connect to the UK’s gas grid as well. As in the Dublin example above, the goal is to be able to generate electricity on site, either to supplement or to replace the power they draw from the electricity grid.

This raises an important question. If you’re generating your own power to run a datacentre, and the country you’re in has a target to run on a decarbonised electricity grid, does the fossil gas you burn count towards that national target?

This came up during the day, but it wasn’t clear what the official answer was.

On one hand, when the UK’s Clean Power 2030 target was set, the UK’s own North Sea gas fields were in terminal decline, with economically viable supplies due to run out around 2030. Getting off gas is much easier when your own reserves are drying up, and the UK, like a growing number of other countries, has binding legal targets to reduce national carbon emissions, thanks to laws like the Climate Change Act 2008. If you definitely need to hit an emissions reduction target, reducing reliance on gas is a good idea, and not just because of the emissions that come from burning it.

You can obviously reduce carbon emissions by reducing the gas you get out of the ground and burn for energy, but even if you don’t, and you just replace domestic gas supply with steadily declining amounts of imported gas from other parts of the world, you introduce new sources of uncertainty in being able to reach your own climate targets.

Just getting gas from wherever it is extracted to where you need to burn it can cause wildly differing amounts of global warming by itself, because methane is such a powerful greenhouse gas even when it isn’t burned. If it gets into the atmosphere, it has a global warming effect at least 60 times as strong as carbon dioxide over the time frame relevant for UK Net Zero targets, and it can easily leak from pipes in transit, from ships during transport, and so on. So this is another reason getting off gas is a good idea: reducing reliance on gas makes progress towards a target written into law more predictable, because your suppliers’ ability to manage leaks in their infrastructure no longer affects the life-cycle carbon emissions of the energy you rely on. See this chart for how the source of fossil gas affects the amount of warming caused: you don’t need much leakage for gas to be nearly as bad as coal, climate-wise.

When we look at it this way, if getting off gas is a key part of your strategy to decarbonise how you generate electricity, then it shouldn’t matter whether the gas powering a datacentre is burned in a power station on the grid or in engines on site: the goal is still decarbonisation.

On the other hand, big audacious policy goals like Clean Power 2030 were presented as being about the electricity grid. Greening the UK’s electricity to get off gas would help meet national climate goals, but it’s nowhere near as much of a climate win if carbon emissions from datacentres simply move off the electricity grid and onto the gas grid instead.

3. Economic incentives help explain renewed interest in datacentre demand flexibility

Our final takeaway from the day was yet another way people see grid-awareness and datacentre flexibility in the context of climate goals and greener digital services.

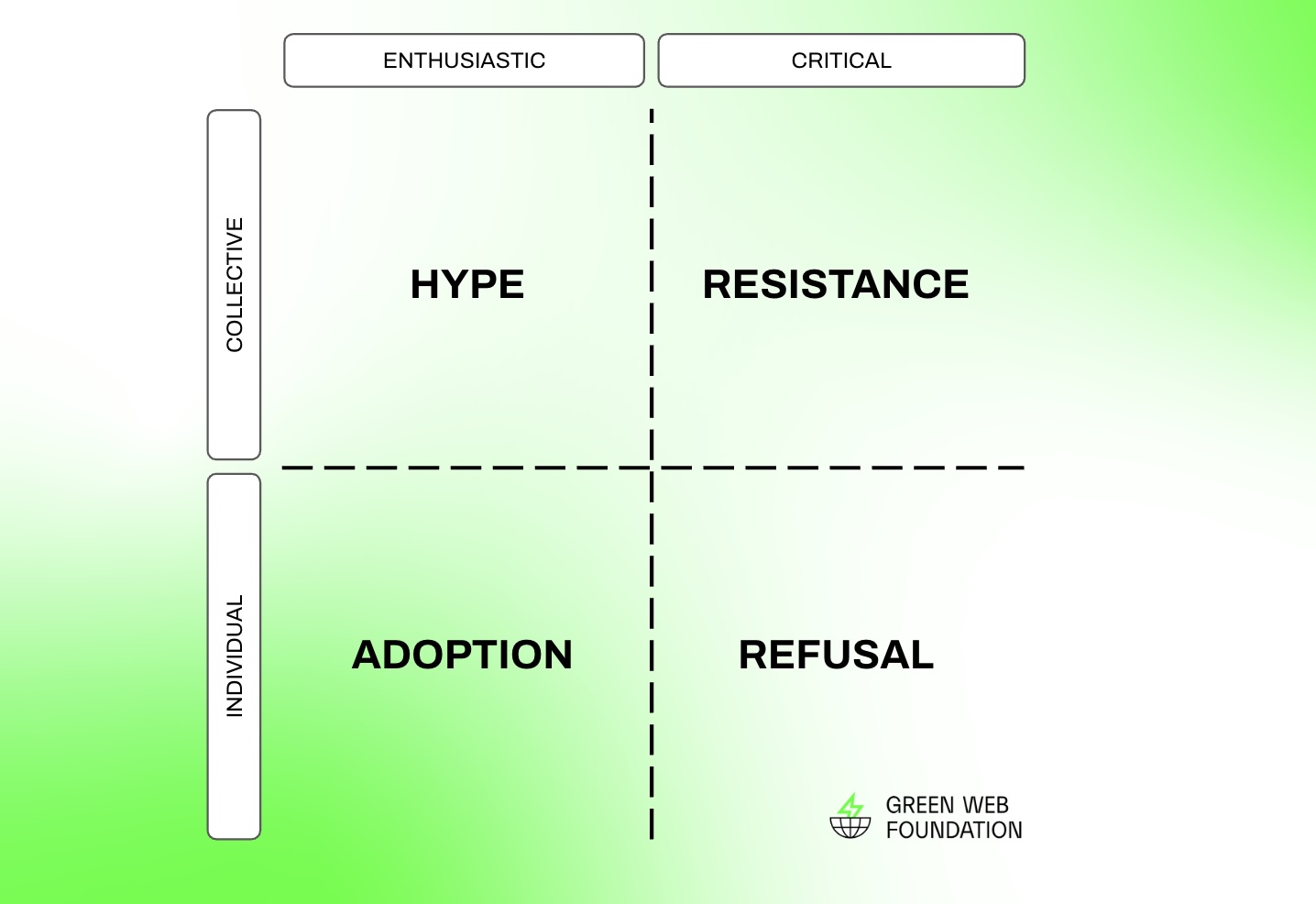

Except for a few celebrated and relatively isolated cases, up until 2025, it was fairly safe to say that carbon-aware, or grid-aware computing was an interesting, if somewhat niche idea with relatively limited adoption. During the workshop we had a chance to explore why this might be the case from a few different perspectives, and one was economics.

For grid operators

If you look at things from the point of view of grid operators, while there may be some longer-term climate goals they have to meet, there are usually two much more immediate priorities to deal with: reliability, followed by cost. People expect the lights to come on when they flick a switch, and the consequences of losing power are severe. Similarly, since 2022, the cost of power has been a politically charged topic, and the UK is known for having some of the highest electricity prices in Europe. Faced with this, you need some really good reasons to rock the boat and try something new that could risk the reliability or cost of providing power to people.

For datacentre operators

If you look at things from the point of view of a datacentre operator, the economic incentives don’t look too strong either. If you are not working for a huge vertically integrated cloud giant like Facebook, Amazon, Google or Microsoft, then most of the time you’re being paid by customers to provide very reliable infrastructure for their servers to run in, because the perceived cost of downtime is far greater than the cost of any power or rented space they pay you for.

Even if we put the downtime argument aside, for the majority of operators offering colocation services, the business model is based around renting space in racks to customers, who bring their own hardware to run inside these racks, and charging these customers for fixed power capacity. These customers frequently pay a fixed fee for capacity in megawatts, not for energy in megawatt hours, meaning there is less incentive to save energy, and they are largely not exposed to changes on the grid that affect the cost of energy on an hourly basis. Datacentre operators don’t really have a way to pass these changes on to customers in a way that might change their behaviour.
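A rough sketch of why fixed capacity pricing blunts the incentive to respond to the grid. In this hypothetical Python example the prices and loads are invented for illustration, and real colocation contracts vary a lot: a customer who halves their power draw during an expensive hour sees an energy-priced bill fall, but a capacity-priced bill stays exactly the same.

```python
def capacity_bill(contracted_kw: float, price_per_kw: float) -> float:
    """Bill based only on the capacity reserved, however much energy is used."""
    return contracted_kw * price_per_kw


def energy_bill(hourly_kwh: list[float], hourly_price_per_kwh: list[float]) -> float:
    """Bill based on the energy used in each hour, at that hour's price."""
    return sum(kwh * price for kwh, price in zip(hourly_kwh, hourly_price_per_kwh))


prices = [0.10, 0.30]      # hypothetical $/kWh for a cheap hour and an expensive hour
steady = [500.0, 500.0]    # kWh used in each hour with no flexibility
flexible = [500.0, 250.0]  # halves usage in the expensive hour

# Hypothetical 1,000 kW contract at $150 per kW: identical for both profiles
print(capacity_bill(1_000, 150.0))
# The energy-priced bill is the only one that rewards the flexible profile
print(energy_bill(steady, prices), energy_bill(flexible, prices))
```

Under these made-up numbers, the flexible profile cuts the energy-priced bill from about $200 to about $125, while the capacity bill doesn’t move at all.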

This is different for the larger hyperscale operators mentioned above, who frequently own both the facilities and the hardware running in the racks. These organisations buy energy at a scale where changes to conditions on the grid do show up in the cost they pay. This partly explains why these companies have been more proactive with carbon-aware or grid-aware software, but to understand why even these players have been relatively slow to build demand flexibility into their software, it helps to understand how the economics look to people offering cloud and AI services as well.

For people operating hardware for cloud and AI services

Even before AI became the force driving the buildout of new datacentres, selling cloud services was a high-margin business, where services like virtual servers and hosted databases were sold at a high enough markup that the cost of the electricity used to provide them was a relatively low share of the total.

Instead, the cost of hardware made up a relatively large share of costs, and over its lifetime, the more hours of active ‘billable’ use you can sell, the faster you recoup the cost of buying the hardware in the first place, and the more profitable your service will be. This dynamic acts as a disincentive against demand flexibility in software. A lot of the time, any savings you might make by scaling back computation when energy is expensive would be outweighed by the revenue lost from selling fewer hours of services at a substantial markup.

With a shift to AI services, this dynamic has intensified. AI-accelerated hardware might use more energy, but relatively speaking, the cost of the hardware is even higher. This means the only way to cover the cost of the new AI servers you paid top dollar for is to use them as much as possible: either selling as many hours of service as you can, or using them to create the next AI model you can sell access to.

To put some numbers on this, industry analysts at SemiAnalysis say that for AI and cloud services, every megawatt of datacentre capacity in operation can bring in between 10 and 12 million US dollars in revenue per year. There are 8,760 hours in a year, so, assuming that megawatt is drawn for every one of those hours and rounding to the nearest hundred dollars, every megawatt hour of power you pay for brings in somewhere between $1,100 and $1,400 of revenue.

When the cost of energy as an input typically ranges between 50 and 150 US dollars per megawatt hour, it’s easy to see why it makes more sense to sell the extra hours of services, rather than rely on changes in the cost of energy to improve the economics of operating a service. This also applies even when you have an abundance of clean cheap power on the grid – if you’re already incentivised to sell as many hours of computation as possible, you don’t really have much scope to scale up the services you offer further as you’re already working your hardware as hard as you possibly can.
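Here’s the arithmetic from the last two paragraphs as a short Python sketch. It takes the SemiAnalysis range at face value and, as a simplifying assumption, treats a megawatt of capacity as drawing a full megawatt for all 8,760 hours of the year. The function names are our own, for illustration, and revenue is treated as strictly proportional to hours of operation, which real contracts won’t always be.

```python
HOURS_PER_YEAR = 8_760


def revenue_per_mwh(revenue_per_mw_year: float) -> float:
    """Revenue per MWh, assuming 1 MW is drawn at full load every hour of the year."""
    return revenue_per_mw_year / HOURS_PER_YEAR


def net_gain_from_curtailing_one_mwh(revenue_per_mw_year: float,
                                     energy_price_per_mwh: float) -> float:
    """Energy cost avoided minus revenue forgone by switching off 1 MW for one hour."""
    return energy_price_per_mwh - revenue_per_mwh(revenue_per_mw_year)


for revenue in (10_000_000, 12_000_000):
    # Rounded to the nearest hundred dollars: prints 1100.0, then 1400.0
    print(round(revenue_per_mwh(revenue), -2))

# Even at the top of the $50-150/MWh range, curtailing loses money (about -$1,220)
print(net_gain_from_curtailing_one_mwh(12_000_000, 150))
```

The result is negative for any energy price in that range: every megawatt hour not consumed saves tens or low hundreds of dollars, but forgoes over a thousand dollars of revenue.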

Where the economics of demand flexibility do work in favour of datacentres

In the face of this, you might ask – if this is all true, why the fuss about demand flexibility for datacentres at all?

In the second half of 2025, one use case for demand flexibility in datacentres has captured the attention of industry and policymakers alike, and to understand it, you need to understand that most of the time, most electricity grids are running nowhere near their peak capacity.

While utilisation of the grid changes over the course of the day, broadly speaking, over a year, the maximum capacity of the grid is only required for a tiny share of the time: on the order of tens of hours, or a few hundred at most.

We have established that new datacentres, particularly the new generation of AI datacentres, are prodigious energy consumers. Until recently, the received wisdom was that even though the electricity grid could accommodate their power demands for most of the year, during those handfuls of hours when the grid was close to capacity, their demand would exceed what the grid could supply. It would make the peaks ‘peakier’ and risk the stability of the grid for everyone. This meant that they wouldn’t be granted a grid connection until grid operators had carried out upgrades, and new supply was available to match this newer, ‘peakier’ peak demand in the system.

However, a report published in the first half of the year, Rethinking Load Growth: Assessing the Potential for Integration of Large Flexible Loads in US Power Systems, put forward a new idea. If a datacentre could voluntarily scale back the demand it placed on the electricity grid during that small share of the year when the grid was under stress, in theory it could be granted a connection to the grid sooner, without needing to wait for future upgrades, or even new grid-level power generation. From a climate point of view this also sounds promising, because most of the time, the new generation built to meet these peaks in demand would be new gas turbines, which may be fast to respond, but are inefficient and cause a lot of carbon pollution when generating the extra energy the grid requires.
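A toy sketch of the idea, under heavily simplified assumptions: a single grid limit, a datacentre with a constant load, and five made-up hours of other demand on the grid. The hypothetical `required_curtailment` function works out how much the datacentre would need to shed in each hour to keep the total within the limit. This is our own illustration, not the report’s methodology, and on a real grid the hours needing curtailment would be a tiny share of the year rather than two hours out of five.

```python
def required_curtailment(base_load_mw: list[float], limit_mw: float,
                         dc_load_mw: float) -> list[float]:
    """MW the datacentre must shed each hour so total load stays within the limit.

    Curtailment is capped at the datacentre's own load: if the rest of the grid
    already exceeds the limit, the datacentre can't fix that on its own.
    """
    return [min(dc_load_mw, max(0.0, base + dc_load_mw - limit_mw))
            for base in base_load_mw]


other_demand = [700.0, 800.0, 950.0, 990.0, 850.0]  # hypothetical MW, one value per hour
shed = required_curtailment(other_demand, limit_mw=1_000.0, dc_load_mw=100.0)

print(shed)                             # [0.0, 0.0, 50.0, 90.0, 0.0]
print(sum(1 for mw in shed if mw > 0))  # hours needing curtailment: 2
print(sum(shed))                        # MWh curtailed over the period: 140.0
```

Outside those two hours, the datacentre runs at full load on a grid that doesn’t need any new peak capacity to accommodate it.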

This has an effect on the economics too. For large datacentres, if an operator thinks they can sell all of the capacity they have, then suddenly demand flexibility makes a lot more sense for their project. If you have a 200 megawatt datacentre project waiting in a queue for a grid connection, and you think, as industry analysts tell you, and as we explored above, that every megawatt you have on the grid can bring in between 10 and 12 million US dollars of revenue per year, then this means getting a grid connection for your project even six months earlier might mean a billion extra dollars in revenue. This makes it much easier to justify investing in demand flexibility.
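The six-month example works out like this in a minimal Python sketch. It takes the analysts’ range at face value and assumes, optimistically, that all 200 megawatts are sold from day one with no ramp-up period; `extra_revenue` is a hypothetical helper name.

```python
def extra_revenue(capacity_mw: float, revenue_per_mw_year: float,
                  months_earlier: float) -> float:
    """Revenue gained by connecting `months_earlier` months sooner.

    Assumes all capacity is sold from the first day, with no ramp-up period.
    """
    return capacity_mw * revenue_per_mw_year * months_earlier / 12


low = extra_revenue(200, 10_000_000, 6)
high = extra_revenue(200, 12_000_000, 6)
print(f"${low / 1e9:.1f}bn to ${high / 1e9:.1f}bn")  # $1.0bn to $1.2bn
```

Set against those figures, even a fairly expensive investment in flexibility can look like a bargain to the project’s backers.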

From the policymaker’s point of view this is great too: if you’ve been told that new datacentres are your best hope of staying nationally competitive, then suddenly you have a new way to deliver the economic growth you were counting on.

And as a grid operator this sounds good too – you’re no longer the bottleneck everyone is complaining about, and you don’t even need to wait to build new energy generation.

But again, the goal of this kind of demand flexibility is different to the one we introduced at the beginning of this post. It’s not about taking the same level of computation and changing when or where it runs to reduce its overall impact.

Instead, it’s more a case of using demand flexibility to increase the total amount of computation that takes place without needing new investments in peak capacity on the grid, where the mechanism at play is increased utilisation of the underlying electricity grid.

In isolation, this sounds good – on a national grid where most of the power comes from clean, non-fossil forms of electricity generation like wind and solar, this might even mean that the average carbon intensity of power used falls, but in absolute terms the total amount of energy consumed may have risen. As long as a grid is partially fossil powered, this can still mean an absolute rise in carbon pollution, which for many people is the opposite outcome desired through grid-aware or carbon-aware computing.
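A toy example shows how both things can be true at once. In this Python sketch the figures are made up: a datacentre uses 100 MWh at 300 gCO2e/kWh, and flexibility lets it add another 100 MWh during hours when the grid is cleaner, at 100 gCO2e/kWh. Each block of consumption is a hypothetical `(energy_mwh, intensity_g_per_kwh)` pair, and the helper names are our own.

```python
def emissions_tonnes(blocks: list[tuple[float, float]]) -> float:
    """Total emissions in tonnes CO2e for (energy_mwh, intensity_g_per_kwh) pairs."""
    # MWh x gCO2e/kWh gives kg (1 MWh = 1,000 kWh), so divide by 1,000 for tonnes
    return sum(mwh * g_per_kwh for mwh, g_per_kwh in blocks) / 1_000


def average_intensity(blocks: list[tuple[float, float]]) -> float:
    """Energy-weighted average carbon intensity in gCO2e/kWh."""
    total_mwh = sum(mwh for mwh, _ in blocks)
    # tonnes x 1,000 = kg, and kg per MWh is the same ratio as g per kWh
    return emissions_tonnes(blocks) * 1_000 / total_mwh


before = [(100, 300)]          # hypothetical existing demand
after = before + [(100, 100)]  # extra demand added during cleaner hours

print(emissions_tonnes(before), average_intensity(before))  # 30.0 300.0
print(emissions_tonnes(after), average_intensity(after))    # 40.0 200.0
```

The average intensity falls by a third, from 300 to 200 gCO2e/kWh, yet total emissions rise from 30 to 40 tonnes, because the extra computation is additional rather than moved from elsewhere.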

So which is it?

Right now, it’s not clear, but we know there are steps you can take to make one outcome more likely than the other, and elsewhere we’ve written about how the falling cost of clean energy generation opens up new alternatives to reaching for gas every time.

We’ll post updates about the workshop outputs as they become available. In the meantime, for those who can respond, that UK consultation is now open, and we’ll keep sharing what we know and what we learn to make a fossil-free internet possible. If you want to collaborate with us on this topic, the fastest way to do so is to get in touch via our support form.